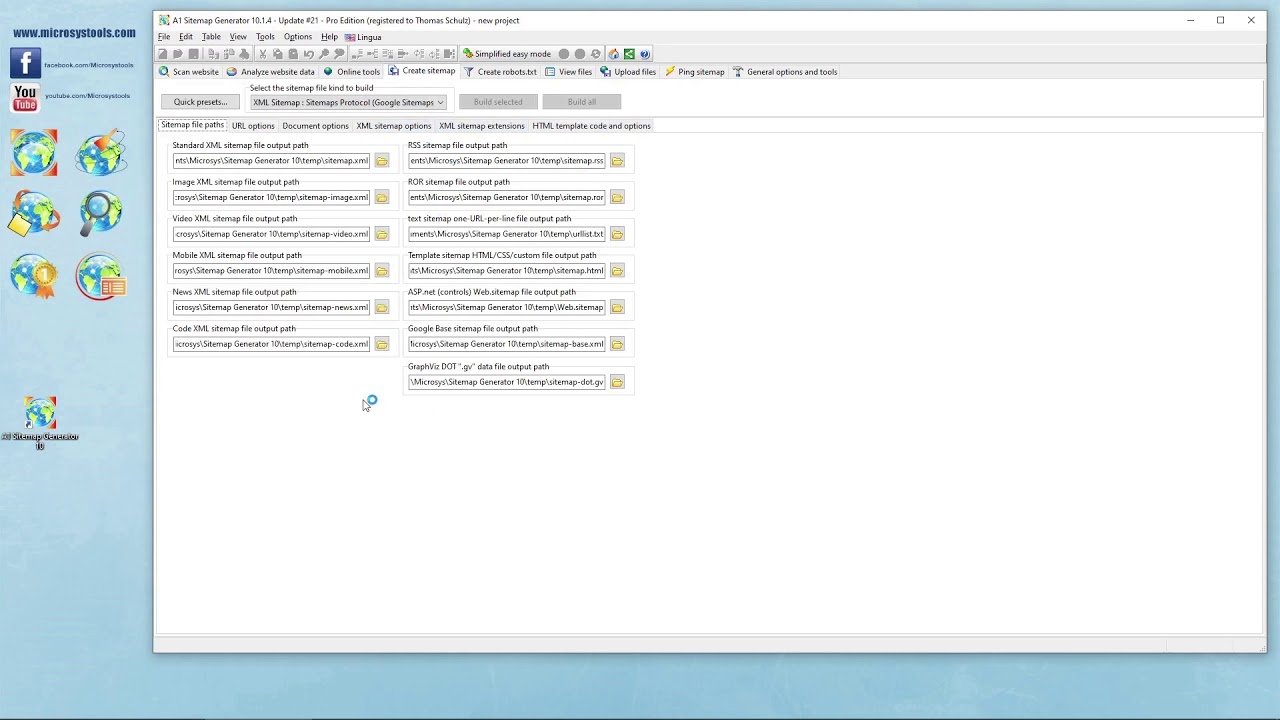

Robot.txt - pretty much empty after 'creation'

Started by cwallace, January 06, 2019, 11:47:04 AM
